How to make a serverless Flutter video sharing app with Firebase Storage, including HLS and client-side encoding

March 16, 2020

What we’re building

We’ll see how to build a Flutter app for iOS/Android that allows users to view and share videos. In my previous post I showed how to do this with Publitio as our video storage API. In this tutorial we’ll use Firebase Cloud Storage to host the videos instead. We’ll also add client-side encoding and HLS support, so the client can stream the videos with adaptive bitrate.

The stack

- Flutter - For making cross platform apps.

- Firebase Cloud Firestore - For storing video metadata (urls) and syncing between clients (without writing server code).

- Firebase Cloud Storage - For hosting the actual videos.

- FFmpeg - For running client-side video encoding.

Why client-side encoding?

In most video workflows there will be a transcoding server or serverless cloud function, that encodes the video into various resolutions and bitrates for optimal viewing in all devices and network speeds.

If you don’t want to use a transcoding server or API (which can be quite pricey), and depending on the kind of videos your app needs to upload and view, you can choose to forego server side transcoding altogether, and encode the videos solely on the client. This will save considerable costs, but will put the burden of encoding videos on the clients.

Even if you do use some server-side transcoding solution, you’ll probably want to perform minimal encoding on the client. The raw video sizes (especially on iOS), can be huge, and you don’t want to be wasteful of the user’s data plan, or force them to wait for WiFi unnecessarily.

To see how to do server-side encoding using Cloud Functions and a dedicated API, read the next post.

Video encoding primer

Here is a short primer on some terms we’re going to use.

X264 Codec / Encoder

This is the software used to encode the video into the H.264/MPEG-4 AVC format. There are many other codecs, but seeing as the H.264 format is currently the only one that is natively supported on both iOS and Android, this is what we’ll use.

Adaptive bitrate streaming

A method to encode the video into multiple bitrates (of varying quality), and each bitrate into many small chunks. The streaming protocol will allow the player to choose which quality the next chunk will be, according to network speed. So if you go from WiFi to cellular data, your player can adapt the bitrate accordingly, without reloading the entire video.

There are many streaming protocols, but the one that’s natively supported on iOS and Android is Apple’s HLS (HTTP Live Streaming). In HLS, the video is split into chunks in the form of .ts files, with an .m3u8 playlist file that points to the chunks. For each quality, or variant stream, there is a playlist file, and a master playlist to rule them all 💍.
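To make the idea of adaptive bitrate concrete, here is a minimal sketch of the kind of decision a player makes before fetching the next chunk. It is only an illustration: real players do this internally with more elaborate heuristics, and the Variant class and pickVariant function are hypothetical names, not part of any plugin. The bandwidth values mirror the two bitrates used later in this post.

```dart
/// One HLS variant stream: its playlist name and the bandwidth
/// (in bits per second) needed to play it smoothly.
class Variant {
  final String playlist;
  final int bandwidth;
  const Variant(this.playlist, this.bandwidth);
}

/// Returns the highest-bandwidth variant that fits [measuredBps],
/// falling back to the lowest-bandwidth variant when none fits.
Variant pickVariant(List<Variant> variants, int measuredBps) {
  final sorted = [...variants]
    ..sort((a, b) => a.bandwidth.compareTo(b.bandwidth));
  var choice = sorted.first;
  for (final v in sorted) {
    if (v.bandwidth <= measuredBps) choice = v;
  }
  return choice;
}

void main() {
  const variants = [
    Variant('0_playlistVariant.m3u8', 2000000),
    Variant('1_playlistVariant.m3u8', 365000),
  ];
  // Fast connection: the 2000k variant fits.
  print(pickVariant(variants, 5000000).playlist);
  // Slow connection: only the 365k variant fits.
  print(pickVariant(variants, 500000).playlist);
}
```

Because the choice is made per chunk, a drop in measured bandwidth only affects the chunks that have not been downloaded yet.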

Configurations

Installing flutter_ffmpeg

We’ll use the flutter_ffmpeg package to run encoding jobs on iOS/Android. flutter_ffmpeg requires choosing a codec package, according to what you want to use. Here we’ll use the min-gpl-lts package, as it contains the x264 codec, and can be used in release builds.

Add the following to your android/build.gradle:

ext {

flutterFFmpegPackage = "min-gpl-lts"

}

And in your Podfile replace this line:

pod name, :path => File.join(symlink, 'ios')

with this:

if name == 'flutter_ffmpeg'

pod name+'/min-gpl-lts', :path => File.join(symlink, 'ios')

else

pod name, :path => File.join(symlink, 'ios')

end

Cloud Storage configuration

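If you’ve already set up Firebase in your project, as discussed in the previous post, then you just need to add the firebase_storage package, which provides the FirebaseStorage API used for the uploads later in this post (cloud_firestore is still needed for the metadata).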

Now we need to configure public read access to the video files, so that we can access them without a token (see comment). For this example I’ve added no authentication to keep things simple, so we’ll allow public write access too, but this should be changed in a production app. So in Firebase Console, go to Storage -> Rules and change it to:

service firebase.storage {

match /b/{bucket}/o {

match /{allPaths=**} {

allow read;

allow write;

}

}

}

Stages in client side video processing

This is the sequence of steps we’ll have to do for each video:

- Get raw video path from image_picker

- Get aspect ratio

- Generate thumbnail using ffmpeg

- Encode raw video into HLS files

- Upload thumbnail jpg to Cloud Storage

- Upload encoded files to Cloud Storage

- Save video metadata to Cloud Firestore

Let’s go over every step and see how to implement it.

Encoding Provider

We’ll create an EncodingProvider class that will encapsulate the encoding logic. The class will hold the flutter_ffmpeg instances needed.

class EncodingProvider {

static final FlutterFFmpeg _encoder = FlutterFFmpeg();

static final FlutterFFprobe _probe = FlutterFFprobe();

static final FlutterFFmpegConfig _config = FlutterFFmpegConfig();

...

}

Generating thumbnail

We’ll use the encoder to generate a thumbnail which we’ll save later to Cloud Storage. We’re telling FFmpeg to take one frame (-vframes option) out of videoPath (-i option) with size of width x height (-s option). We check the result code to ensure the operation finished successfully.

static Future<String> getThumb(videoPath, width, height) async {

assert(File(videoPath).existsSync());

final String outPath = '$videoPath.jpg';

final arguments =

'-y -i $videoPath -vframes 1 -an -s ${width}x${height} -ss 1 $outPath';

final int rc = await _encoder.execute(arguments);

assert(rc == 0);

assert(File(outPath).existsSync());

return outPath;

}

Getting video length and aspect ratio

We’ll use FlutterFFprobe.getMediaInformation and calculate the aspect ratio (needed for the flutter video player) and get video length (needed to calculate encoding progress):

static Future<Map<dynamic, dynamic>> getMediaInformation(String path) async {

return await _probe.getMediaInformation(path);

}

static double getAspectRatio(Map<dynamic, dynamic> info) {

final int width = info['streams'][0]['width'];

final int height = info['streams'][0]['height'];

final double aspect = height / width;

return aspect;

}

static int getDuration(Map<dynamic, dynamic> info) {

return info['duration'];

}

Encoding

Now for the actual video encoding. For this example I used the parameters from this excellent HLS tutorial.

We’re creating two variant streams, one with 2000k bitrate, and one with 365k bitrate. This will generate multiple fileSequence.ts files (video chunks) for each variant quality stream, and one playlistVariant.m3u8 file (playlist) for each stream.

It will also generate a master.m3u8 that lists all the playlistVariant.m3u8 files.
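To give a feel for what the master playlist contains, here is a small sketch that builds a simplified one as a string. This is an approximation for illustration only: the file FFmpeg actually writes will typically carry extra attributes (such as resolution and codecs) and bandwidth figures that include the audio track. The buildMasterPlaylist function is a hypothetical helper, not something the app needs.

```dart
/// Builds the text of a simplified HLS master playlist that points at
/// one variant playlist per bitrate. Bandwidths are in bits per second.
String buildMasterPlaylist(Map<String, int> variantBandwidths) {
  final buffer = StringBuffer()
    ..writeln('#EXTM3U')
    ..writeln('#EXT-X-VERSION:3');
  variantBandwidths.forEach((playlist, bandwidth) {
    buffer
      // Each variant is announced by a STREAM-INF tag...
      ..writeln('#EXT-X-STREAM-INF:BANDWIDTH=$bandwidth')
      // ...followed by the URI of that variant's playlist.
      ..writeln(playlist);
  });
  return buffer.toString();
}

void main() {
  print(buildMasterPlaylist({
    '0_playlistVariant.m3u8': 2000000,
    '1_playlistVariant.m3u8': 365000,
  }));
}
```

The player reads this file first, then picks one of the listed variant playlists depending on the available bandwidth.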

static Future<String> encodeHLS(videoPath, outDirPath) async {

assert(File(videoPath).existsSync());

final arguments =

'-y -i $videoPath '+

'-preset ultrafast -g 48 -sc_threshold 0 '+

'-map 0:0 -map 0:1 -map 0:0 -map 0:1 '+

'-c:v:0 libx264 -b:v:0 2000k '+

'-c:v:1 libx264 -b:v:1 365k '+

'-c:a copy '+

'-var_stream_map "v:0,a:0 v:1,a:1" '+

'-master_pl_name master.m3u8 '+

'-f hls -hls_time 6 -hls_list_size 0 '+

'-hls_segment_filename "$outDirPath/%v_fileSequence_%d.ts" '+

'$outDirPath/%v_playlistVariant.m3u8';

final int rc = await _encoder.execute(arguments);

assert(rc == 0);

return outDirPath;

}

Note: This is a simple encoding example, but the options are endless. For a complete list: https://ffmpeg.org/ffmpeg-formats.html
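One caveat with building the command as a single string is that a video path containing spaces would be split into several arguments. A possible workaround, sketched below, is to build the arguments as a list with one token per element. The buildHlsArguments helper is a hypothetical name; my understanding is that flutter_ffmpeg exposes an executeWithArguments method that accepts such a list, but treat that as an assumption and check it against the plugin version you use.

```dart
/// Builds the same HLS encoding options as a list, one token per
/// element, so that paths containing spaces stay intact.
List<String> buildHlsArguments(String videoPath, String outDirPath) {
  return [
    '-y', '-i', videoPath,
    '-preset', 'ultrafast', '-g', '48', '-sc_threshold', '0',
    // Map the video and audio inputs twice, once per variant.
    '-map', '0:0', '-map', '0:1', '-map', '0:0', '-map', '0:1',
    '-c:v:0', 'libx264', '-b:v:0', '2000k',
    '-c:v:1', 'libx264', '-b:v:1', '365k',
    '-c:a', 'copy',
    '-var_stream_map', 'v:0,a:0 v:1,a:1',
    '-master_pl_name', 'master.m3u8',
    '-f', 'hls', '-hls_time', '6', '-hls_list_size', '0',
    '-hls_segment_filename', '$outDirPath/%v_fileSequence_%d.ts',
    '$outDirPath/%v_playlistVariant.m3u8',
  ];
}

void main() {
  final args = buildHlsArguments('/tmp/my video.mov', '/tmp/out');
  // The input path is a single element, spaces included.
  print(args[2]);
  print(args.length);
}
```

Note that in list form the quotes around the var_stream_map value and the segment filename are no longer needed, since each element is already a single argument.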

Showing encoding progress

Encoding can take a long time, and it’s important to show the user that something is happening. We’ll use FFmpeg’s enableStatisticsCallback to get the current encoded frame’s time, and divide by video duration to get the progress. We’ll then update the _progress state field, which is connected to a LinearProgressIndicator.
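One detail worth guarding in that division: the progress indicator expects a value between 0.0 and 1.0, and the duration may be zero or unknown for some files. Here is a minimal sketch of a safer calculation, assuming the time and duration are reported in the same unit. The encodingProgress function is a hypothetical helper name.

```dart
/// Converts the encoder's current timestamp into a progress value
/// between 0.0 and 1.0. Both arguments must use the same unit
/// (for example milliseconds).
double encodingProgress(int time, int duration) {
  // Avoid dividing by zero when the duration is missing or invalid.
  if (duration <= 0) return 0.0;
  return (time / duration).clamp(0.0, 1.0).toDouble();
}

void main() {
  print(encodingProgress(500, 1000)); // 0.5
  print(encodingProgress(1500, 1000)); // 1.0
  print(encodingProgress(10, 0)); // 0.0
}
```

Inside the statistics callback, the result of such a helper could be assigned to _progress instead of the raw division.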

class _MyHomePageState extends State<MyHomePage> {

double _progress = 0.0;

...

void initState() {

EncodingProvider.enableStatisticsCallback((int time,

int size,

double bitrate,

double speed,

int videoFrameNumber,

double videoQuality,

double videoFps) {

if (_canceled) return;

setState(() {

_progress = time / _videoDuration;

});

});

...

super.initState();

}

_getProgressBar() {

return Container(

padding: EdgeInsets.all(30.0),

child: Column(

crossAxisAlignment: CrossAxisAlignment.center,

mainAxisAlignment: MainAxisAlignment.center,

children: <Widget>[

Container(

margin: EdgeInsets.only(bottom: 30.0),

child: Text(_processPhase),

),

LinearProgressIndicator(

value: _progress,

),

],

),

);

}

Uploading the files

Now that encoding is done, we need to upload the files to Cloud Storage.

Uploading a single file to Cloud Storage

Uploading to Cloud Storage is quite straightforward. We get a StorageReference into the path where we want the file to be stored with FirebaseStorage.instance.ref().child(folderName).child(fileName). Then we call ref.putFile(file), and listen to the event stream with _onUploadProgress, where we update the _progress state field like we did with the encoding. When the uploading is done, the await taskSnapshot.ref.getDownloadURL() will return the url we can use to access the file.

Future<String> _uploadFile(filePath, folderName) async {

final file = new File(filePath);

final basename = p.basename(filePath);

final StorageReference ref =

FirebaseStorage.instance.ref().child(folderName).child(basename);

StorageUploadTask uploadTask = ref.putFile(file);

uploadTask.events.listen(_onUploadProgress);

StorageTaskSnapshot taskSnapshot = await uploadTask.onComplete;

String videoUrl = await taskSnapshot.ref.getDownloadURL();

return videoUrl;

}

void _onUploadProgress(event) {

if (event.type == StorageTaskEventType.progress) {

final double progress =

event.snapshot.bytesTransferred / event.snapshot.totalByteCount;

setState(() {

_progress = progress;

});

}

}

Fixing the HLS files

Now we need to go over all the generated HLS files (.ts and .m3u8), and upload them into the Cloud Storage folder.

But before we do, we need to fix them so that they point to the correct urls relative to their place in Cloud Storage.

This is how the .m3u8 files are created on the client:

#EXTM3U

#EXT-X-VERSION:3

#EXT-X-TARGETDURATION:3

#EXT-X-MEDIA-SEQUENCE:0

#EXTINF:2.760000,

1_fileSequence_0.ts

#EXT-X-ENDLIST

Notice the line 1_fileSequence_0.ts. This is the relative path to the .ts chunk in the playlist. But when we upload this to a folder, it’s missing the folder name from the URL. It’s also missing the ?alt=media query parameter, which is required to get the actual file from Firebase, and not just the metadata. This is what it should look like:

#EXTM3U

#EXT-X-VERSION:3

#EXT-X-TARGETDURATION:3

#EXT-X-MEDIA-SEQUENCE:0

#EXTINF:2.760000,

video4494%2F1_fileSequence_0.ts?alt=media

#EXT-X-ENDLIST

So we need a function for adding these two things to each .ts entry, and also to each .m3u8 entry in the master playlist:

void _updatePlaylistUrls(File file, String videoName) {

final lines = file.readAsLinesSync();

var updatedLines = List<String>();

for (final String line in lines) {

var updatedLine = line;

if (line.contains('.ts') || line.contains('.m3u8')) {

updatedLine = '$videoName%2F$line?alt=media';

}

updatedLines.add(updatedLine);

}

final updatedContents = updatedLines.reduce((value, element) => value + '\n' + element);

file.writeAsStringSync(updatedContents);

}

Uploading the HLS files

Finally we’ll go through all the generated files and upload them, fixing the .m3u8 files as necessary:

Future<String> _uploadHLSFiles(dirPath, videoName) async {

final videosDir = Directory(dirPath);

var playlistUrl = '';

final files = videosDir.listSync();

int i = 1;

for (FileSystemEntity file in files) {

final fileName = p.basename(file.path);

final fileExtension = getFileExtension(fileName);

if (fileExtension == 'm3u8') _updatePlaylistUrls(file, videoName);

setState(() {

_processPhase = 'Uploading video file $i out of ${files.length}';

_progress = 0.0;

});

final downloadUrl = await _uploadFile(file.path, videoName);

if (fileName == 'master.m3u8') {

playlistUrl = downloadUrl;

}

i++;

}

return playlistUrl;

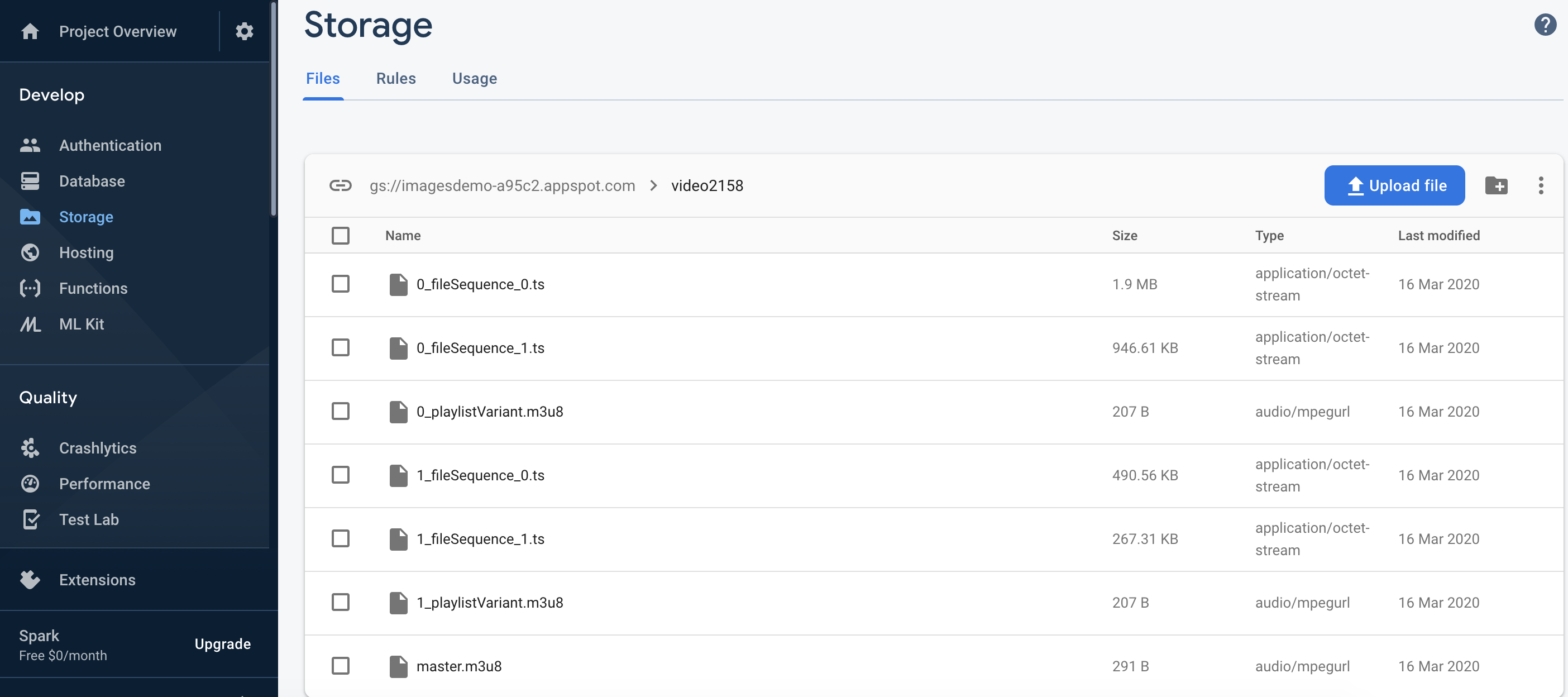

}This is how the uploaded files look like in Cloud Storage:
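As a side note on the playlist fix-up step, the rewriting can also be sketched as a pure function over the playlist text, which makes it easy to try out without touching the file system. This variant differs slightly from the function shown earlier: instead of looking for .ts or .m3u8 in a line, it treats every line that is not blank and does not start with # as a URI. The rewritePlaylist name is hypothetical.

```dart
/// Rewrites the URI lines of an HLS playlist so that they resolve
/// inside [folder] in Cloud Storage. Tag lines (starting with '#')
/// and blank lines are left untouched.
String rewritePlaylist(String contents, String folder) {
  // Encode the folder separator, e.g. "video4494/" -> "video4494%2F".
  final prefix = Uri.encodeComponent('$folder/');
  return contents.split('\n').map((line) {
    final trimmed = line.trim();
    if (trimmed.isEmpty || trimmed.startsWith('#')) return line;
    return '$prefix$trimmed?alt=media';
  }).join('\n');
}

void main() {
  const playlist = '#EXTM3U\n'
      '#EXTINF:2.760000,\n'
      '1_fileSequence_0.ts\n'
      '#EXT-X-ENDLIST';
  print(rewritePlaylist(playlist, 'video4494'));
}
```

Running it on the sample playlist turns the chunk line into video4494%2F1_fileSequence_0.ts?alt=media and leaves the tag lines as they were.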

Saving metadata to Firestore

After we have the video’s storage url, we can save the metadata in Firestore, allowing us to share the videos instantly between users. As we saw in the previous post, saving the metadata to Firestore is easy:

await Firestore.instance.collection('videos').document().setData({

'videoUrl': video.videoUrl,

'thumbUrl': video.thumbUrl,

'coverUrl': video.coverUrl,

'aspectRatio': video.aspectRatio,

'uploadedAt': video.uploadedAt,

'videoName': video.videoName,

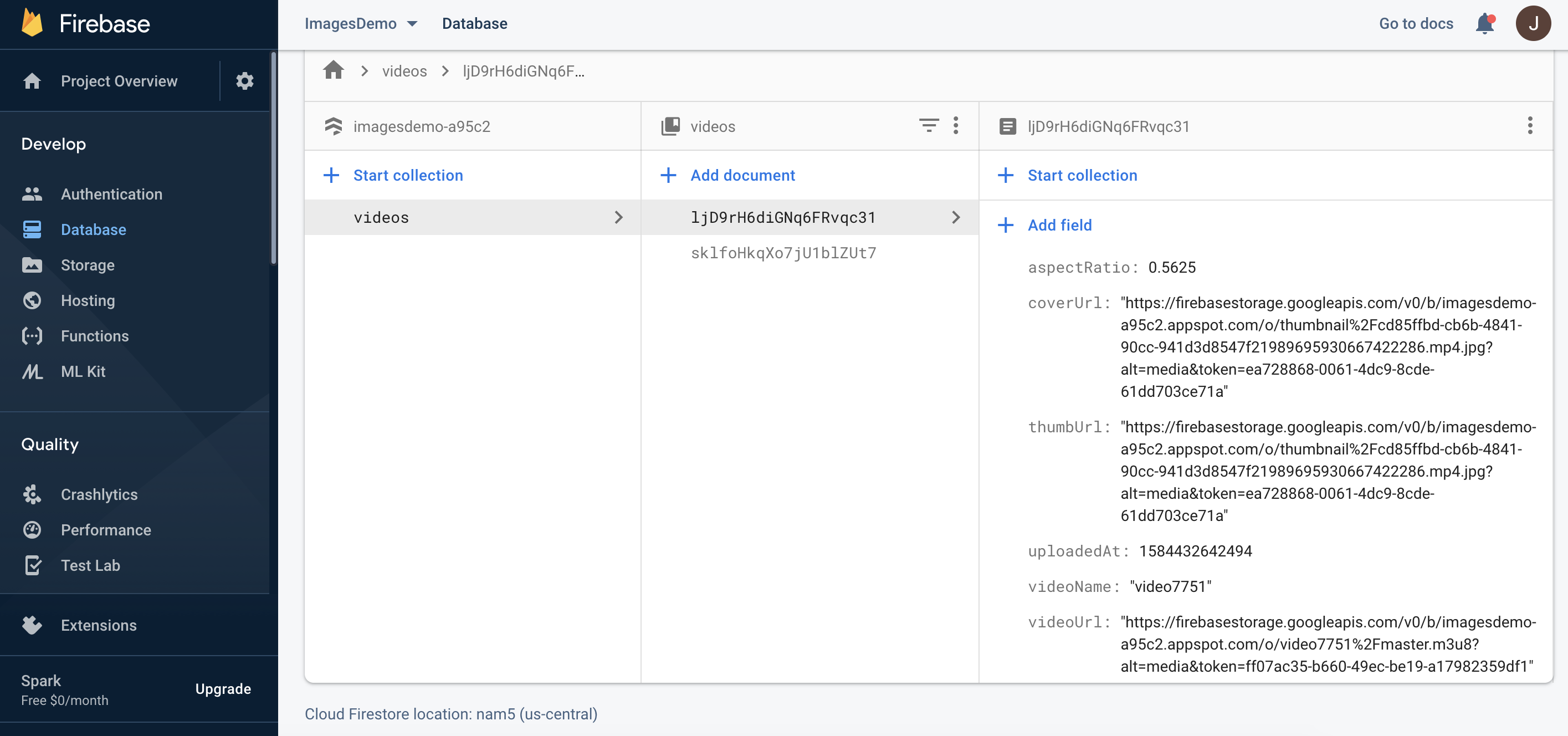

});This is how the video document looks like in Firestore:

Putting it all together

Now putting it all together in a processing function that goes through all the stages we’ve seen and updates the state to display the current job status (the rawVideoFile input comes from the image_picker output):

Future<void> _processVideo(File rawVideoFile) async {

final String rand = '${new Random().nextInt(10000)}';

final videoName = 'video$rand';

final Directory extDir = await getApplicationDocumentsDirectory();

final outDirPath = '${extDir.path}/Videos/$videoName';

final videosDir = new Directory(outDirPath);

videosDir.createSync(recursive: true);

final rawVideoPath = rawVideoFile.path;

final info = await EncodingProvider.getMediaInformation(rawVideoPath);

final aspectRatio = EncodingProvider.getAspectRatio(info);

setState(() {

_processPhase = 'Generating thumbnail';

_videoDuration = EncodingProvider.getDuration(info);

_progress = 0.0;

});

final thumbFilePath =

await EncodingProvider.getThumb(rawVideoPath, thumbWidth, thumbHeight);

setState(() {

_processPhase = 'Encoding video';

_progress = 0.0;

});

final encodedFilesDir =

await EncodingProvider.encodeHLS(rawVideoPath, outDirPath);

setState(() {

_processPhase = 'Uploading thumbnail to firebase storage';

_progress = 0.0;

});

final thumbUrl = await _uploadFile(thumbFilePath, 'thumbnail');

final videoUrl = await _uploadHLSFiles(encodedFilesDir, videoName);

final videoInfo = VideoInfo(

videoUrl: videoUrl,

thumbUrl: thumbUrl,

coverUrl: thumbUrl,

aspectRatio: aspectRatio,

uploadedAt: DateTime.now().millisecondsSinceEpoch,

videoName: videoName,

);

setState(() {

_processPhase = 'Saving video metadata to cloud firestore';

_progress = 0.0;

});

await FirebaseProvider.saveVideo(videoInfo);

setState(() {

_processPhase = '';

_progress = 0.0;

_processing = false;

});

}

Showing the video list

We saw in the previous article how to listen to Firestore and display a ListView of videos. In short, we used snapshots().listen() to listen to the update stream, and ListView.builder() to create a list that reacts to changes in the stream, via the _videos state field.

For each video we display a Card containing a FadeInImage.memoryNetwork showing the video’s thumbUrl, and next to it the videoName and uploadedAt field. I’ve used the timeago plugin to display the upload time in a friendly way.

_getListView() {

return ListView.builder(

padding: const EdgeInsets.all(8),

itemCount: _videos.length,

itemBuilder: (BuildContext context, int index) {

final video = _videos[index];

return GestureDetector(

onTap: () {

Navigator.push(

context,

MaterialPageRoute(

builder: (context) {

return Player(

video: video,

);

},

),

);

},

child: Card(

child: new Container(

padding: new EdgeInsets.all(10.0),

child: Stack(

children: <Widget>[

Row(

crossAxisAlignment: CrossAxisAlignment.start,

children: <Widget>[

Stack(

children: <Widget>[

Container(

width: thumbWidth.toDouble(),

height: thumbHeight.toDouble(),

child: Center(child: CircularProgressIndicator()),

),

ClipRRect(

borderRadius: new BorderRadius.circular(8.0),

child: FadeInImage.memoryNetwork(

placeholder: kTransparentImage,

image: video.thumbUrl,

),

),

],

),

Expanded(

child: Container(

margin: new EdgeInsets.only(left: 20.0),

child: Column(

crossAxisAlignment: CrossAxisAlignment.start,

mainAxisSize: MainAxisSize.max,

children: <Widget>[

Text("${video.videoName}"),

Container(

margin: new EdgeInsets.only(top: 12.0),

child: Text(

'Uploaded ${timeago.format(new DateTime.fromMillisecondsSinceEpoch(video.uploadedAt))}'),

),

],

),

),

),

],

),

],

),

),

),

);

});

}

And there we have it:

Limitations

Jank

Video encoding is a CPU-intensive job. Because Flutter is single-threaded, running FFmpeg encoding will cause jank (choppy UI) while it’s running. The solution is of course to offload the encoding to a background process. Regrettably, I haven’t found a way to do this easily with flutter_ffmpeg. If you have a working approach for long-running video encoding jobs in the background, please let me know! (To mitigate the jank effects, you can show an encoding progress bar, and not allow any other use of the UI until it’s done.)

Premature termination

Another problem with long encoding / uploading jobs is that the OS can decide to close your app’s process when it’s minimized, before the job has completed. You have to manage the job status and restart / resume the job from where it stopped.

Public access to stored videos

HLS serving from Cloud Storage with this method requires public read access for all files. If you need authenticated access to the videos, you’ll have to find a way to dynamically update the .m3u8 playlist with the Firebase token each time the client downloads the file (because the token will be different).

Caching

The flutter video_player plugin doesn’t currently support caching. You can try to use this fork which supports caching (I use it in my app), but I haven’t tested it with HLS.

To use it, add this in pubspec.yaml:

video_player:

git:

url: git://github.com/syonip/plugins.git

ref: a669b59

path: packages/video_player

Wrap Up

I think this is a good DIY approach to hosting videos, for two main reasons:

- Minimal amount of coding - there’s no server code to write or maintain.

- Very cheap - If you’re working on a side project and want to start with zero cost, you can. Firebase’s free plan has 1GB storage and 10 GB/month transfer limit, which is good to start with.

Thanks for reading! As always, full source code can be found on GitHub.

If you have any questions, please leave a comment!